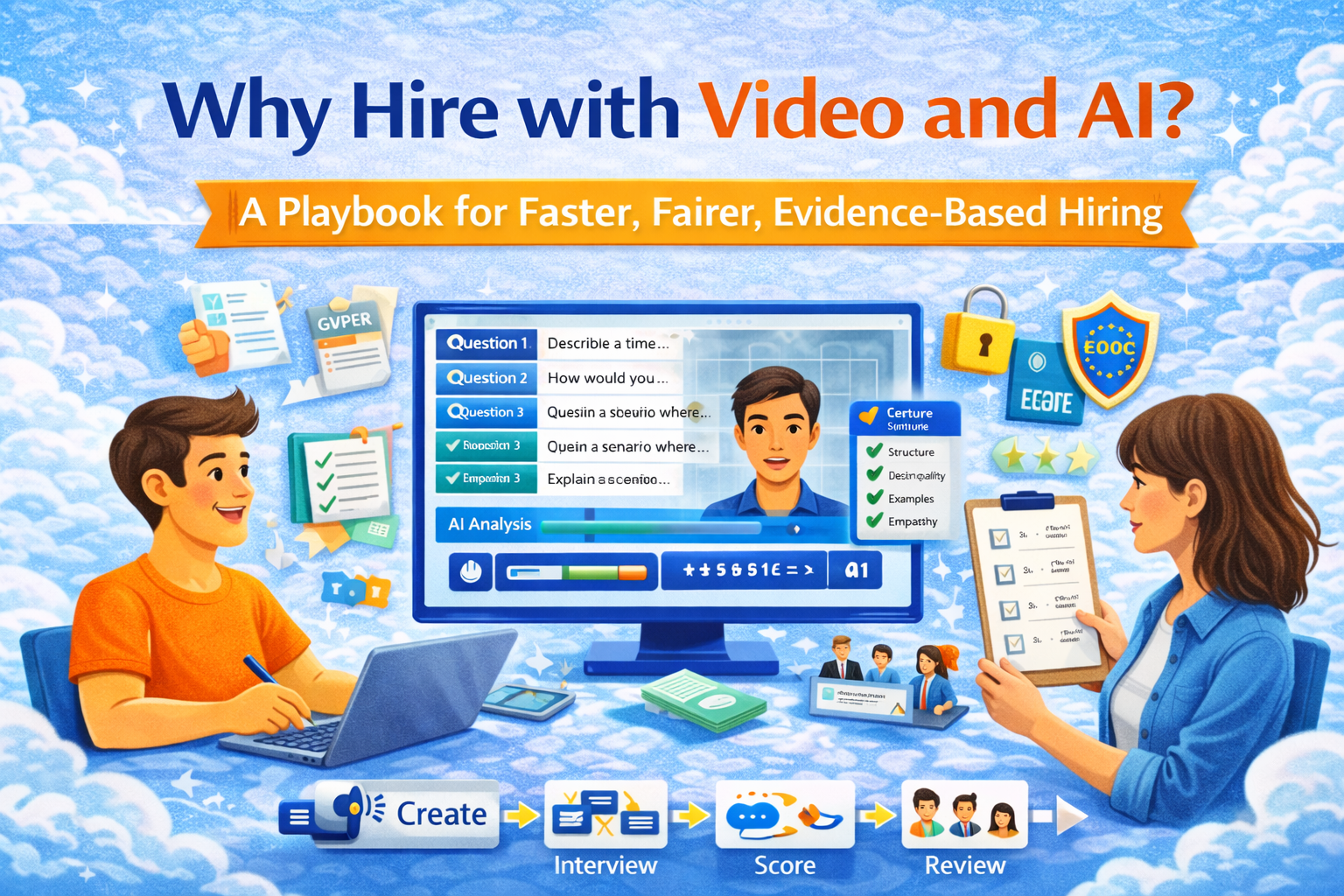

Why hire with video and AI in the first place?

Hiring usually fails for boring reasons: weak signal, inconsistent evaluation, and “vibes” winning over evidence. Video + AI can fix some of that—when you use it like a measuring tool, not a crystal ball.

What “video + AI” means in practice

- Video: candidates answer structured questions on video (live or one-way/asynchronous).

- AI: helps with workflow (scheduling, summarizing, note-taking, tagging competencies) and sometimes evaluation (scoring answers, ranking, flagging gaps).

The sweet spot: AI reduces admin + enforces structure while humans keep accountability for final decisions.

Where it actually helps (real use cases)

1) High-volume screening without calendar chaos

One-way interviews let candidates respond on their own schedule, and recruiters review only the shortlist. This is especially useful for support, sales, operations, and junior roles.

2) Skills evidence, not CV astrology

A well-designed question set makes candidates show competence (reasoning, communication, problem solving) instead of “I led a cross-functional initiative” poetry.

3) Standardization (aka fairness through consistency)

Everyone gets the same questions, the same time limits, and the same rubric. That doesn’t magically remove bias, but it does cut random variance between interviewers, which is often the bigger problem.

4) Better candidate experience if you do it like a grown-up

Candidates hate black boxes. They tolerate automation when you:

- explain what’s happening,

- keep the time reasonable,

- and give feedback on next steps.

The part most teams ignore: the risk map (bias, privacy, accessibility)

If you use AI in hiring, assume someone will eventually ask:

“Can you prove this process is fair, explainable, and lawful?”

Bias & discrimination risk

US regulators, including the EEOC, explicitly warn that automated tools can produce discriminatory outcomes, and that this includes tools used for hiring and screening.

High-risk pattern to avoid: tools that claim to infer personality, honesty, or “culture fit” from facial expressions, voice, accent, or micro-signals. Even if the vendor swears it works, you’ll struggle to defend it.

Transparency & notice requirements

If you hire for NYC roles and use automated employment decision tools, Local Law 144 requires bias audits and candidate notices (plus public posting of audit summaries).

Data protection & automated decision-making rules

In the UK/EU context, automated decision-making and profiling trigger extra obligations and give candidates specific rights. The UK ICO’s guidance is a good baseline for what “responsible” looks like.

EU AI Act: recruitment is explicitly “high-risk”

The EU AI Act treats certain employment/recruitment AI systems as high-risk, which implies stronger obligations (risk management, documentation, governance, etc.).

Bottom line

If your implementation can’t answer these three questions, it’s not ready:

- What exactly is AI doing? (assist vs decide)

- How do we test for disparate impact / unfairness?

- What’s our retention, consent/notice, and appeal process?
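For the disparate-impact question, a common first check is the impact ratio. The sketch below assumes you can export, for each applicant, a demographic category and whether the tool advanced them; the category labels and function names are hypothetical. It computes the ratio the way NYC’s Local Law 144 rules describe it for selection tools (a category’s selection rate divided by the selection rate of the most-selected category) and flags anything under 0.8, the “four-fifths” rule of thumb from the EEOC’s Uniform Guidelines. Treat a flag as a prompt to investigate, not a legal verdict: the EEOC itself calls the four-fifths rule a rule of thumb, and small samples can swing these ratios a lot.

```python
from collections import Counter


def selection_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Selection rate per category: applicants advanced / applicants in that category."""
    totals = Counter()
    selected = Counter()
    for category, was_selected in outcomes:
        totals[category] += 1
        if was_selected:
            selected[category] += 1
    return {c: selected[c] / totals[c] for c in totals}


def impact_ratios(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Each category's selection rate divided by the highest category's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no one was selected; impact ratios are undefined")
    return {c: rate / best for c, rate in rates.items()}


def below_four_fifths(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Categories whose impact ratio falls under the four-fifths rule of thumb."""
    return sorted(c for c, r in ratios.items() if r < threshold)


# Hypothetical data: group A has 40 applicants (20 advanced), group B has 50 (15 advanced).
data = [("A", True)] * 20 + [("A", False)] * 20 + [("B", True)] * 15 + [("B", False)] * 35
ratios = impact_ratios(data)
print(ratios, below_four_fifths(ratios))
```

Here group B’s selection rate (0.3) is 60% of group A’s (0.5), so B is flagged. If your tool scores rather than selects, the LL144 rules use a “scoring rate” (the share of a category scoring above the sample median) in place of the selection rate; the same ratio logic applies.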

A practical playbook: how to implement video + AI without making it weird

Step 1: Decide what AI is allowed to do

Use this simple tiering:

Tier A — Safe + common

- scheduling automation

- interview transcription / summarization

- rubric assistance (“does this answer cover criteria X/Y/Z?”)

- duplicate detection, note cleanup

Tier B — Conditional

- scoring answers only against a transparent rubric

- ranking candidates with human review

- anomaly flags (“missing required example”, “didn’t answer question 2”)

Tier C — Don’t (unless you want pain)

- facial emotion scoring

- “personality” inference from voice/face

- opaque overall “hire/no hire” decisions
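One way to make the tiering enforceable rather than aspirational is to encode it in a gate your pipeline consults before a feature is switched on. This is a sketch under assumptions: the feature names are hypothetical, and requiring both human review and a transparent rubric for every Tier B feature is a deliberately strict reading of the list above. Unknown features are denied by default, so a new vendor capability has to be classified before anyone can use it.

```python
from enum import Enum


class Tier(Enum):
    A = "safe"         # workflow help: scheduling, transcription, summaries
    B = "conditional"  # scoring/ranking/flags, only with safeguards
    C = "prohibited"   # emotion or personality inference, opaque hire/no-hire


# Hypothetical feature identifiers mapped to the tiers described above.
FEATURE_TIERS = {
    "scheduling": Tier.A,
    "transcription": Tier.A,
    "summarization": Tier.A,
    "rubric_coverage_check": Tier.A,
    "duplicate_detection": Tier.A,
    "rubric_scoring": Tier.B,
    "candidate_ranking": Tier.B,
    "anomaly_flags": Tier.B,
    "facial_emotion_scoring": Tier.C,
    "personality_inference": Tier.C,
    "automated_hire_decision": Tier.C,
}


def is_allowed(feature: str, *, human_review: bool, transparent_rubric: bool) -> bool:
    """Gate a feature by tier; anything unclassified is denied."""
    tier = FEATURE_TIERS.get(feature)
    if tier is None or tier is Tier.C:
        return False
    if tier is Tier.A:
        return True
    # Tier B (assumed policy): require a human reviewer AND a rubric candidates could see.
    return human_review and transparent_rubric
```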

Step 2: Make the interview structured (this is the real secret)

Pick 4–6 questions max for screening. Total candidate time: 10–20 minutes.

Example (Customer Support role):

- “Explain a time you handled an angry customer. What did you do, step by step?”

- “Here’s a scenario: customer says the product is broken. What’s your first response?”

- “Teach me something simple in 60 seconds.” (tests clarity)

Step 3: Use a rubric that a stranger can apply

Score each question 0–4:

- 4: clear structure, specific example, correct decision logic, empathy

- 3: solid answer, minor gaps

- 2: generic, lacks detail, partially correct

- 1: confused, missing key steps

- 0: no answer / irrelevant

Rule: You can use AI to draft scores, but a human must confirm.
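The “AI drafts, human confirms” rule is easier to keep when the data model enforces it instead of habit. In this sketch (class and field names are hypothetical), the only score downstream code can read is `final`, which exists only after a named reviewer confirms it; the AI draft is stored alongside so you can compare the two later.

```python
from dataclasses import dataclass
from typing import Optional

# The 0-4 scale from the rubric above.
RUBRIC = {
    4: "clear structure, specific example, correct decision logic, empathy",
    3: "solid answer, minor gaps",
    2: "generic, lacks detail, partially correct",
    1: "confused, missing key steps",
    0: "no answer / irrelevant",
}


@dataclass
class QuestionScore:
    question_id: str
    ai_draft: Optional[int] = None     # suggestion only, never used for decisions
    human_score: Optional[int] = None  # set only through confirm()
    reviewer: Optional[str] = None

    def confirm(self, reviewer: str, score: int) -> None:
        """Record the human decision; may differ from the AI draft."""
        if score not in RUBRIC:
            raise ValueError(f"score must be one of {sorted(RUBRIC)}")
        self.reviewer = reviewer
        self.human_score = score

    @property
    def final(self) -> int:
        """The score used for decisions: only ever the human-confirmed value."""
        if self.human_score is None:
            raise RuntimeError(f"{self.question_id}: awaiting human confirmation")
        return self.human_score
```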

Step 4: Build candidate trust (aka “don’t be shady”)

Your invite message should say:

- interview format (one-way vs live)

- time estimate

- whether AI is used and how

- how decisions are made and who reviews

- accommodation path (accessibility needs)

This isn’t just “nice”: telling candidates all of this up front tends to reduce disputes and improve completion rates.
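If invites are generated from templates, a small check can stop a message from going out with one of those five items missing. The keys below are hypothetical placeholders for the items in the list, and the check only verifies that each item is present and non-blank, not that the wording is accurate.

```python
# Hypothetical keys, one per item the invite must cover.
REQUIRED_NOTICE_ITEMS = (
    "format",            # one-way vs live
    "time_estimate",
    "ai_use",            # whether AI is used and how
    "decision_process",  # how decisions are made and who reviews
    "accommodations",    # how to request an alternative format
)


def missing_items(notice: dict[str, str]) -> list[str]:
    """List required items that are absent or blank."""
    return [k for k in REQUIRED_NOTICE_ITEMS if not notice.get(k, "").strip()]


def render_invite(notice: dict[str, str]) -> str:
    """Join the items in a fixed order; refuse to render an incomplete invite."""
    missing = missing_items(notice)
    if missing:
        raise ValueError(f"invite is missing: {', '.join(missing)}")
    return "\n\n".join(notice[k].strip() for k in REQUIRED_NOTICE_ITEMS)
```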

Step 5: Audit the process like a product

Even if not legally required, do a lightweight audit:

- completion rate by device type/timezone

- drop-off at each question

- score distribution by interviewer vs AI suggestions

- false negatives (great hires you rejected) via backtesting
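A minimal sketch of three of these audit metrics, assuming you can export each candidate as a `(device, questions_answered)` pair and each scored answer as an `(ai_draft, human_final)` pair. The function names and export shapes are assumptions. Backtesting false negatives needs outcome data you may not have for rejected candidates, so it is left out here.

```python
from collections import defaultdict


def completion_by_device(attempts: list[tuple[str, int]], n_questions: int) -> dict[str, float]:
    """Share of candidates on each device type who answered every question."""
    finished, total = defaultdict(int), defaultdict(int)
    for device, answered in attempts:
        total[device] += 1
        if answered == n_questions:
            finished[device] += 1
    return {d: finished[d] / total[d] for d in total}


def drop_off_by_question(attempts: list[tuple[str, int]], n_questions: int) -> dict[int, float]:
    """For question i: of those who reached it, the share who stopped without answering."""
    rates = {}
    for i in range(1, n_questions + 1):
        reached = sum(1 for _, answered in attempts if answered >= i - 1)
        stopped = sum(1 for _, answered in attempts if answered == i - 1)
        rates[i] = stopped / reached if reached else 0.0
    return rates


def ai_human_gap(score_pairs: list[tuple[float, float]]) -> tuple[float, float]:
    """(mean signed gap, mean absolute gap) between AI drafts and human finals.

    A positive signed gap means AI drafts run higher than human reviewers."""
    if not score_pairs:
        raise ValueError("need at least one scored answer")
    gaps = [ai - human for ai, human in score_pairs]
    return sum(gaps) / len(gaps), sum(abs(g) for g in gaps) / len(gaps)
```

Tracking the signed and absolute gap separately matters: drafts that are too generous for some candidates and too harsh for others can average out to a signed gap near zero while the absolute gap stays large.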

For structuring the wider risk-management process, the NIST AI Risk Management Framework (AI RMF 1.0) is a strong reference.

Where SubSchool fits (and why it’s actually useful here)

Most hiring tools start at “screen candidates” and stop. That’s lazy.

With SubSchool, you can run EduHire as a learning + assessment pipeline:

- candidates complete a short course module (role context + micro tasks),

- then submit video interview tasks inside the course,

- your team evaluates with a shared rubric,

- and you keep an audit trail of what was assessed and why.

Also, for internal roles or upskilling:

- your team can create onboarding/training fast (even by uploading a batch of videos),

- and attach consistent homework/tasks so people aren’t “trained” by Slack rumors.

Templates you can copy-paste

1) Candidate notice (plain language)

You’ll complete a short video interview (about 15 minutes).

Your responses will be reviewed by our hiring team. We may use automation to help summarize answers and apply a consistent scoring rubric. Final decisions are made by humans.

If you need an accommodation, reply to this email and we’ll provide an alternative format.

2) Question design checklist

- tests a real job behavior (not trivia)

- answerable without insider info

- has “what good looks like” in 3–5 bullet points

- avoids protected-class proxies (accent/appearance assumptions)
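Only part of this checklist can be checked mechanically. Whether a question tests real job behavior, needs insider info, or leans on protected-class proxies still takes a human reviewer. The sketch below covers the mechanical part and also applies the Step 2 targets (4–6 questions, 10–20 minutes). It assumes each question is stored with an answer time limit, used here as a rough stand-in for total candidate time, and its “what good looks like” bullets; all names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    prompt: str
    time_limit_min: float  # answer time allotted; approximates candidate time
    good_looks_like: list[str] = field(default_factory=list)  # aim for 3 to 5 bullets


def screen_issues(questions: list[Question]) -> list[str]:
    """Return readable problems with a screening question set (empty list = OK)."""
    issues = []
    if not 4 <= len(questions) <= 6:
        issues.append(f"{len(questions)} questions; aim for 4 to 6")
    total = sum(q.time_limit_min for q in questions)
    if not 10 <= total <= 20:
        issues.append(f"{total:g} minutes total; aim for 10 to 20")
    for i, q in enumerate(questions, start=1):
        if not 3 <= len(q.good_looks_like) <= 5:
            issues.append(f"Q{i}: needs 3 to 5 'what good looks like' bullets")
    return issues
```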

3) Rubric snippet (paste into your hiring doc)

For each answer, score:

- clarity/structure (0–4)

- correctness/decision quality (0–4)

- evidence/examples (0–4)

- communication & empathy (0–4)
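A sketch of applying this snippet consistently, assuming each answer is recorded as a dict of the four dimensions (the short keys are hypothetical). It rejects missing or out-of-range scores and reports per-dimension averages next to the 0–16 per-answer total, so a candidate who is strong on empathy but weak on correctness doesn’t disappear into one blended number.

```python
# Hypothetical short keys for the four rubric dimensions, each scored 0-4.
DIMENSIONS = ("clarity", "correctness", "evidence", "communication")


def answer_total(scores: dict[str, int]) -> int:
    """Validate one answer's dimension scores and return their sum (0-16)."""
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for dim in DIMENSIONS:
        if not 0 <= scores[dim] <= 4:
            raise ValueError(f"{dim} must be between 0 and 4, got {scores[dim]}")
    return sum(scores[dim] for dim in DIMENSIONS)


def dimension_means(answers: list[dict[str, int]]) -> dict[str, float]:
    """Average each dimension across a candidate's answers."""
    if not answers:
        raise ValueError("no answers to summarize")
    for a in answers:
        answer_total(a)  # reuse the validation above
    return {dim: sum(a[dim] for a in answers) / len(answers) for dim in DIMENSIONS}


answers = [
    {"clarity": 4, "correctness": 3, "evidence": 2, "communication": 4},
    {"clarity": 2, "correctness": 3, "evidence": 4, "communication": 2},
]
print([answer_total(a) for a in answers])  # [13, 11]
print(dimension_means(answers))
```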

Resources

- EEOC — Employment Discrimination and AI (PDF)

- NYC DCWP — Automated Employment Decision Tools (Local Law 144)

- NIST — AI Risk Management Framework 1.0 (PDF)

- UK ICO — Automated decision-making and profiling (UK GDPR guidance)

- UK Government (DSIT) — Responsible AI in Recruitment

- EU AI Act — Regulation (EU) 2024/1689 (EUR-Lex PDF)